I copied around 30 of these mp3’s to a folder, however later realised that they still needed a bit more auditing - files needed to start exactly on the first beat, and needed to not get out of time throughout the song under the assumed BPM. I have not had Traktor installed for a while, but a lot of the mp3 files in my music collection still have the Traktor-detected BPM stored with the file. Traktor is a DJing program, which is quite capable of detecting the BPM of the tracks you give it, particularly for electronic music. The main training data was obtained from my Traktor collection. My intuition behind the inclusion and order of specific network layers is covered further below. So the current architecture is essentially a convolutional neural network. After switching to Keras, I also added a convolutional layer.

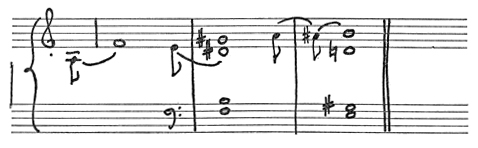

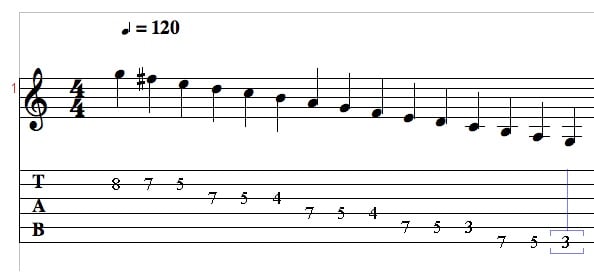

I soon discovered the magic of Keras however, when looking for a way to apply the same dense layer to every time step. My initial architecture involved just dense layers. This image overlays the target output pulse vector (black) over the input frequency spectogram of a clip of audio. This might be useful, for instance if we wanted to synchronise two tracks together. As a bonus, the network will also (hopefully) tell us where the beats are, in addition to just how often they occur. We can relatively easily infer BPM from this vector (though its resolution will determine how accurately). We could then create our target vector by setting (zero-indexed) elements of this vector to 1 (as 120 BPM implies 2 beats per second). Then say the tempo was 120 BPM, and the first beat was at the start of the clip. We might represent this by a vector of zeroes of length 200 - a resolution of 100 frames per second. I achieved this by constructing what I call a ‘pulse vector’ as follows: The BPM could then be inferred from this. Then I decided I could save the network some trouble by having it try to predict the location of the beats in time. First I thought I might try predicting the BPM directly. Output data format (to be predicted by the network) Note the kick drum on each beat in the lowest frequency bin. The values (pixel colour) then indicate the intensity of the audio signal at each frequency and time step.Īn example frequency spectogram from a few seconds of electronic music. These basically contain time on the x-axis, and frequency bins on the y-axis. I figured a frequency spectogram would serve as an appropriate input to whatever network I was planning on training. I don’t know a whole lot about the physics side of audio, or frequency data more generally, but I am familiar with Fourier analysis, and spectograms.

One of the first decisions to make here is what general form the network’s input should take. Initially I had to throw around a few ideas regarding the best way to represent the input audio, the BPM, and what would be an ideal neural network architecture. After a small experiment a while back, I decided to make a more serious second attempt. I have always wondered whether it would be possible to detect the tempo (or beats per minute, or BPM) of a piece of music using a neural network-based approach. Detecting Music BPM using Neural Networks
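The pulse-vector target described in the output data format section can be sketched in plain Python. This is a minimal illustration, not the author’s code: the function names `pulse_vector`, `beat_frames` and `infer_bpm`, the 0.5 threshold, and the choice to estimate BPM from the average spacing between the first and last detected beats are all assumptions made for this example.

```python
def pulse_vector(bpm, duration_s, fps=100, first_beat_s=0.0):
    """Hypothetical helper: a 0/1 target with a 1 at the frame nearest each beat."""
    n_frames = round(duration_s * fps)
    frames_per_beat = fps * 60.0 / bpm  # e.g. 120 BPM at 100 fps -> 50 frames
    vec = [0] * n_frames
    t = first_beat_s * fps
    while round(t) < n_frames:
        vec[round(t)] = 1  # snap each beat to the nearest whole frame
        t += frames_per_beat
    return vec


def beat_frames(vec, threshold=0.5):
    """Hypothetical helper: collapse each run of frames >= threshold into one beat.

    A trained network outputs soft values, so a single beat may light up several
    neighbouring frames; the frame with the highest value in each run is kept.
    """
    beats, run = [], []
    for i, v in enumerate(vec):
        if v >= threshold:
            run.append(i)
        elif run:
            beats.append(max(run, key=lambda j: vec[j]))
            run = []
    if run:
        beats.append(max(run, key=lambda j: vec[j]))
    return beats


def infer_bpm(vec, fps=100, threshold=0.5):
    """Hypothetical helper: BPM from the mean spacing between detected beats."""
    beats = beat_frames(vec, threshold)
    if len(beats) < 2:
        return None  # need at least two beats to measure a spacing
    mean_gap = (beats[-1] - beats[0]) / (len(beats) - 1)  # frames per beat
    return 60.0 * fps / mean_gap


# The worked example from the text: 2 s at 100 fps, 120 BPM, first beat at 0.
v = pulse_vector(120, 2.0)
print([i for i, x in enumerate(v) if x])  # [0, 50, 100, 150]
print(infer_bpm(v))                       # 120.0
```

Because each beat is snapped to a whole frame, a tempo such as 128 BPM (46.875 frames per beat) cannot be represented exactly: over two seconds the beats land on frames 0, 47, 94, 141 and 188, which this estimator reads back as roughly 127.7 BPM, whereas over 30 seconds the rounding errors average out to within about 0.01 BPM. Averaging over the whole span is one assumed design choice; a median of the individual gaps would be more robust to a missed beat, but is limited to whole-frame spacings.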

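The post mentions looking for a way to apply the same dense layer to every time step. In Keras, the `TimeDistributed` wrapper does this, although the post does not say exactly which layers were used. The NumPy sketch below shows the underlying idea under assumed shapes: the spectrogram is stored as `(n_frames, n_bins)`, i.e. transposed relative to the time-on-the-x-axis picture, and one shared weight vector and bias map each frame to a single pulse probability. The function name `shared_dense_per_frame` is hypothetical, and the weights are hand-picked rather than learned: they only look at the lowest frequency bin, mimicking the kick-drum observation in the spectrogram caption.

```python
import numpy as np


def shared_dense_per_frame(spec, weights, bias):
    """Apply one dense unit, with the same weights, to every time step.

    spec:    array of shape (n_frames, n_bins), one spectrogram column per row
    weights: array of shape (n_bins,), shared by all frames
    bias:    scalar
    Returns an array of shape (n_frames,) of sigmoid outputs in (0, 1).
    """
    logits = spec @ weights + bias  # one dot product per frame, no mixing across time
    return 1.0 / (1.0 + np.exp(-logits))


# Toy input: 2 s at 100 fps, 64 frequency bins, energy in the lowest bin on each
# beat at 120 BPM (frames 0, 50, 100, 150). Values are illustrative, not real audio.
n_frames, n_bins = 200, 64
spec = np.zeros((n_frames, n_bins))
spec[[0, 50, 100, 150], 0] = 1.0

# Hand-picked (untrained) weights that respond only to the lowest bin.
weights = np.zeros(n_bins)
weights[0] = 8.0
bias = -4.0

pulse = shared_dense_per_frame(spec, weights, bias)
print(pulse.shape)                          # (200,)
print(np.flatnonzero(pulse > 0.5).tolist()) # [0, 50, 100, 150]
```

Because the same weights are applied to each frame independently, a layer like this cannot compare neighbouring frames on its own; a convolutional layer over time, like the one added after the switch to Keras, is one way to give the model that context.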